UX Team of One - Usability testing

In my previous post I briefly touched on the usability testing phase. Let's dig deeper into it.

Usability testing phases

| Phase | Deliverable |
| --- | --- |
| 🕵️ Find the potential issues | |
| 📄 Design research document | |
| 🛒 Hiring process | |
| 🧱 Creating the prototype | |
| 🎤 Usability testing sessions | |
| 📊 Data analysis | |
| 📽️ Usability testing presentation | |

1. Find the potential design issues

There are many ways to find issues in a digital product. Choose the method that fits your seniority and your deadline :)

Desk research

Customers report a lot of issues in different places:

- Forums

- Customer reviews

- Customer complaints in emails

Stakeholder interviews

Try to find people who are in close touch with the customers. You can talk to various roles, such as:

- Customer care

- Customer support

- Sales

Heuristic analysis

If you're a more senior UX designer and you know the main use case scenarios, you can perform a heuristic analysis.

2. Design research document

The most important thing is to know why you need to do the usability testing. You should know your unknowns: the questions you hope to have answered by the end of the research. Plan your research tasks in advance and create the research design. The design research document can consist of the sections below.

Research summary

Summarise the problem that makes the research necessary.

Research goal

What are the main goals that motivate you to do the research? Your goals are usually driven by your unknowns.

Research questions

Articulate your main research questions and possible subquestions. Your research questions will be the basic elements of the research design document.

Research questions are not questions for the participant.

An example of a research question: "Are users able to create a post?"

Session script

Create a list of tasks, each bound to one of your research questions. For example:

"(RQ1) Imagine you are a new content editor. Create your first post."

You shouldn't add tasks that are not connected to any research question.

Participant profile

Describe the profile of your ideal participant.
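If you keep the research design in a structured form, the rule that every task in the session script must belong to a research question is easy to check. A minimal sketch in Python; the data structures, the IDs, the second research question and the unlinked example task are all hypothetical, made up for illustration:

```python
# Hypothetical research design data; IDs, wording and structure are illustrative only.
research_questions = {
    "RQ1": "Are users able to create a post?",
    "RQ2": "Can users find their drafts?",
}

tasks = [
    {"rq": "RQ1", "text": "Imagine you are a new content editor. Create your first post."},
    {"rq": None, "text": "Change your profile picture."},  # not tied to any research question
]


def unlinked_tasks(tasks, research_questions):
    """Tasks that don't point at a known research question (candidates to drop)."""
    return [t["text"] for t in tasks if t["rq"] not in research_questions]


def uncovered_questions(tasks, research_questions):
    """Research questions that no task in the script will answer."""
    covered = {t["rq"] for t in tasks}
    return [rq for rq in research_questions if rq not in covered]


print("Tasks without a research question:", unlinked_tasks(tasks, research_questions))
print("Research questions without a task:", uncovered_questions(tasks, research_questions))
```

The second check is the mirror image of the same rule: a research question with no task will simply stay unanswered.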

3. Hiring process

According to this nngroup article, you should run usability tests with only five participants. The theory says that more participants would bring you very few new issues, because they mostly run into the ones you've already seen.
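The five-participant advice is based on Nielsen and Landauer's model, where the share of problems found by n participants is 1 - (1 - L)^n and L is the probability that a single participant runs into a given problem. A minimal sketch, assuming Nielsen's published average of L = 0.31; the real value for your product may be lower or higher:

```python
def share_of_problems_found(n_participants: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems found by n participants.

    Nielsen and Landauer model: 1 - (1 - L) ** n, where L (discovery_rate) is
    the chance that a single participant runs into a given problem.
    The default L = 0.31 is Nielsen's reported average, an assumption here.
    """
    return 1 - (1 - discovery_rate) ** n_participants


for n in (1, 3, 5, 10, 15):
    print(f"{n:>2} participants: {share_of_problems_found(n):.0%}")
```

With L = 0.31, five participants already find about 84% of the problems, and doubling to ten adds only about 13 percentage points. That diminishing return is what the advice rests on.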

In my experience, the hiring process is the most difficult of all the usability testing tasks.

You will need to:

- Find suitable participants who match your participant profile

- Schedule the meetings so that they fit both your free time slots and theirs

- Wait for their answers and hope they get back to you before the deadline

Given how complex these tasks are, you should start the hiring process as soon as possible, ideally as soon as you're done with your research questions.

If I could outsource one task, it would be this one.

I mainly use Calendly to organize the meetings with the participants. The benefit of this tool is that multiple participants can pick time slots that don't conflict with each other.

4. Creating the prototype

Figma is handy for simpler prototypes where you don't need to deal with complex user interactions. If you want to prototype an e-shop and simulate the buying process, Axure is the handier prototyping tool.

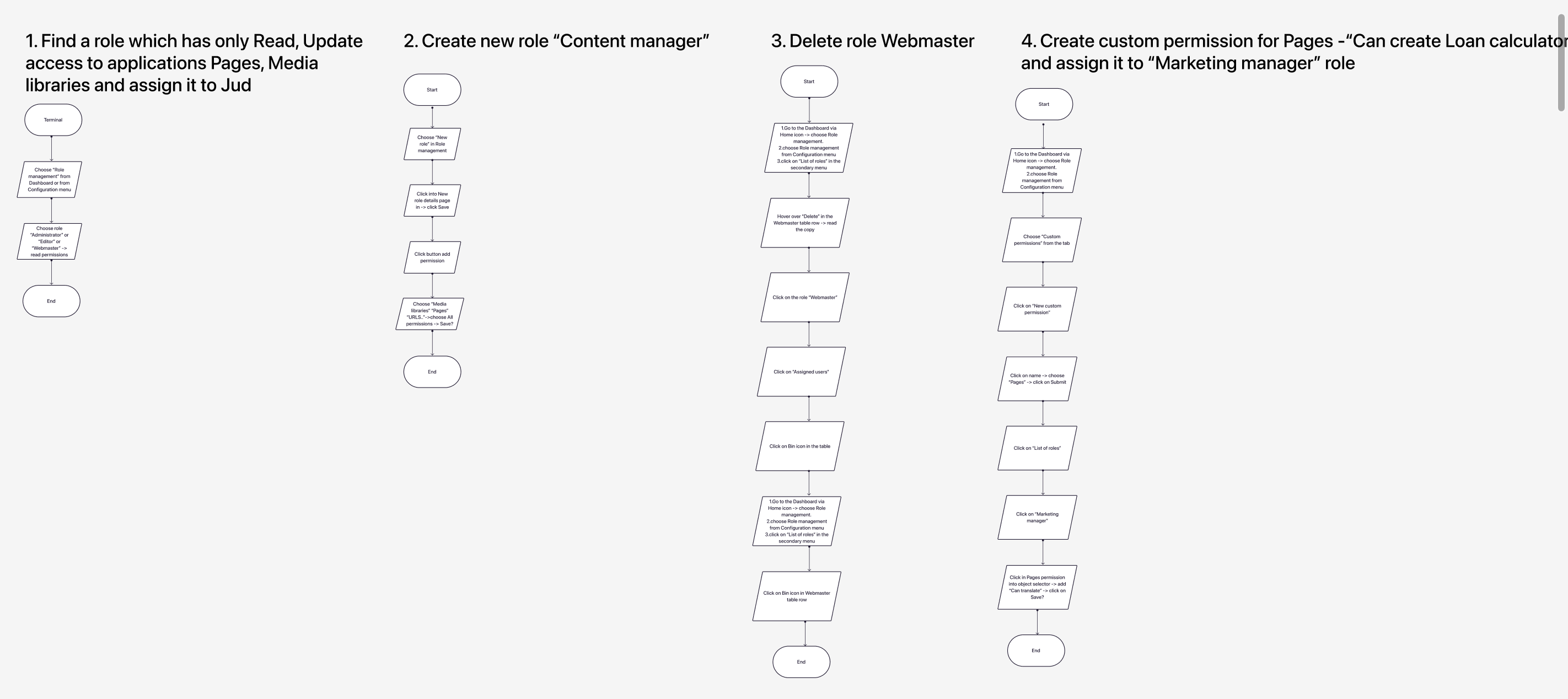

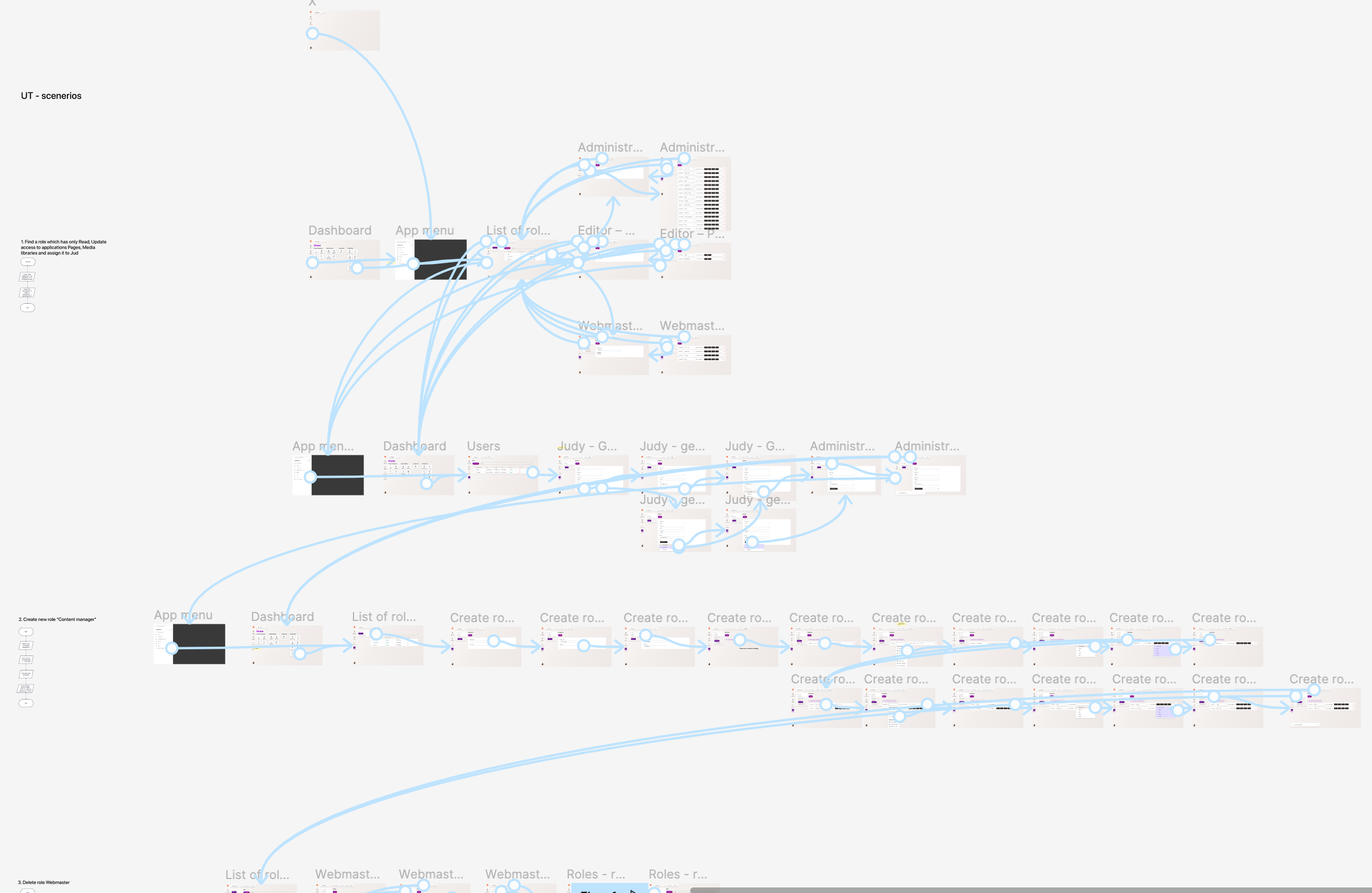

First I create the workflows for the testing tasks, and then I create the connected mockups.

5. Facilitating the testing session

A few notes for beginning facilitators

- Try to find an extra participant for a pilot test

- Try not to use the word "task" too often during the session. You don't want the participant to feel tested, especially after you've told them "There are no wrong or correct answers - we're not testing you".

- Shut up and listen to the participant. If the participant goes silent, don't jump in and break the silence. Be patient and wait; you can count to six. After six you can break the silence and explain to the participant how the task should be done.

- I've had good experiences with sessions where the participants read the task description on their own. If you read the description to them, you could influence them with your tone of voice.

Interview session checklist for the facilitator

✅ Introduction

- Introduce yourself and describe the context

- Icebreaker questions:
  - How is the weather in your country?
  - How was your trip to our office?
  - Do you have any plans for the weekend?

- Put the participant at ease; you want them to feel comfortable with you:
  - "Please remember that there are no wrong or correct answers."
  - "We're not testing you, we're testing our designs."
  - "You can leave any time you wish."

- Ask for consent to record the session: "Can we record this session? We would like to come back to it later on."

✅ Record the session

✅ Testing scenarios and tasks

- Ask the participant to read the task description on their own, ideally aloud

- When the participant is done with the task, ask them to rate it on a scale from one to seven (the Single Ease Question, SEQ): "Please rate the task on a scale from one to seven, where seven is the easiest and one is the hardest." (This is the only time you are allowed to say the word "task".)

✅ Debrief

- Ask the participant to reflect on all the tasks: "If you could change one thing in this prototype, what would it be?"

- Let the participant think it through; they may have some interesting questions: "Would you like to ask any questions that you think should be raised?"

- Say thank you, and don't forget to give the participant an incentive.

6. Analyse the data and find the patterns

Self-reported metrics - SEQ

Go through the individual recordings and rate the participants on the SEQ scale yourself too. Participants might be overconfident when they rate themselves.

You can use the SEQ scores to:

- Calculate the average score for the whole usability test

- Calculate the average score for each individual task
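Both averages are plain arithmetic means. A minimal sketch in Python with made-up ratings for two hypothetical tasks; it keeps the participants' own ratings and the facilitator's ratings from the recordings side by side, so you can spot tasks where participants rated themselves noticeably higher:

```python
from statistics import mean

# Made-up SEQ ratings (1 = very difficult, 7 = very easy), five participants per task.
# "self" = participant's own rating, "observed" = facilitator's rating from the recording.
seq_scores = {
    "Create your first post": {"self": [6, 7, 5, 6, 4], "observed": [5, 6, 3, 5, 3]},
    "Find your drafts": {"self": [7, 6, 6, 5, 7], "observed": [6, 6, 5, 4, 7]},
}


def task_averages(scores, source):
    """Average SEQ score per task for one rating source ("self" or "observed")."""
    return {task: mean(ratings[source]) for task, ratings in scores.items()}


def overall_average(scores, source):
    """Average of every rating from one source across all tasks."""
    return mean(r for ratings in scores.values() for r in ratings[source])


for source in ("self", "observed"):
    print(f"{source} overall: {overall_average(seq_scores, source):.1f}")
    for task, avg in task_averages(seq_scores, source).items():
        print(f"  {task}: {avg:.1f}")
```

Averaging all ratings at once and averaging the per-task averages give the same result only when every task has the same number of ratings, as in this example.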

Performance based on success of the tasks

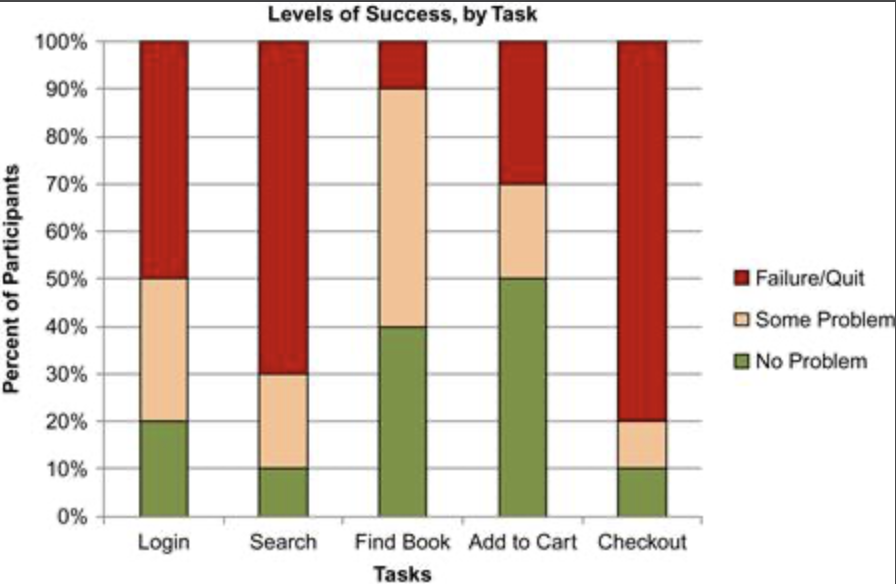

You can use, for example, the scale below to rate performance on the individual tasks:

- Passed

- Passed with limitations

- Failed

You can also show a nice graph to visualise the performance across all the tasks.
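A simple way to get there is to count the outcomes per task. A minimal sketch in Python with made-up results for two hypothetical tasks; the one-character-per-participant text bar only stands in for the graph so the example needs no charting library (in practice a spreadsheet chart or a library such as matplotlib would look nicer):

```python
from collections import Counter

OUTCOMES = ("Passed", "Passed with limitations", "Failed")
SYMBOLS = {"Passed": "#", "Passed with limitations": "+", "Failed": "."}

# Made-up outcomes, one per participant, for two hypothetical tasks.
results = {
    "Create your first post": ["Passed", "Failed", "Passed with limitations", "Passed", "Failed"],
    "Find your drafts": ["Passed", "Passed", "Passed", "Passed with limitations", "Passed"],
}


def outcome_shares(outcomes):
    """Share of participants for each outcome on the three-level scale."""
    counts = Counter(outcomes)
    return {o: counts[o] / len(outcomes) for o in OUTCOMES}


def text_bar(outcomes):
    """Stacked text bar: one character per participant, grouped by outcome."""
    counts = Counter(outcomes)
    return "".join(SYMBOLS[o] * counts[o] for o in OUTCOMES)


for task, outcomes in results.items():
    passed = outcome_shares(outcomes)["Passed"]
    print(f"{task:<24} {text_bar(outcomes)}  passed: {passed:.0%}")
print("Legend: # passed, + passed with limitations, . failed")
```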

Prioritised list of the issues

You can calculate the severity of each issue by multiplying these attributes:

- Criticality score - how critical the task is for the business (Fibonacci scale):
  - 1 (not critical at all)
  - 2
  - 3
  - 5
  - 8

- Impact score - the impact of the issue on the task:
  - 1 (suggestion by the user, almost no impact)
  - 2
  - 3
  - 5

- Issue frequency - number of participants experiencing the issue / total number of participants
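So the severity of an issue is criticality × impact × frequency. A minimal sketch in Python; the `Issue` class and the three example issues are hypothetical, made up for illustration, and the scales are the ones listed above:

```python
from dataclasses import dataclass

CRITICALITY_SCALE = (1, 2, 3, 5, 8)  # how critical the task is for the business
IMPACT_SCALE = (1, 2, 3, 5)          # how much the issue affects the task


@dataclass
class Issue:
    name: str
    criticality: int
    impact: int
    affected: int      # participants who ran into the issue
    participants: int  # total number of participants

    def __post_init__(self):
        if self.criticality not in CRITICALITY_SCALE:
            raise ValueError(f"criticality must be one of {CRITICALITY_SCALE}")
        if self.impact not in IMPACT_SCALE:
            raise ValueError(f"impact must be one of {IMPACT_SCALE}")
        if self.participants <= 0 or not 0 <= self.affected <= self.participants:
            raise ValueError("need participants > 0 and 0 <= affected <= participants")

    @property
    def frequency(self) -> float:
        return self.affected / self.participants

    @property
    def severity(self) -> float:
        return self.criticality * self.impact * self.frequency


# Hypothetical issues from a five-participant test.
issues = [
    Issue("Publish button is hard to find", criticality=8, impact=5, affected=3, participants=5),
    Issue("Draft toggle label is unclear", criticality=3, impact=2, affected=4, participants=5),
    Issue("Participant suggests a larger font", criticality=2, impact=1, affected=1, participants=5),
]

# Prioritised list: highest severity first.
for issue in sorted(issues, key=lambda i: i.severity, reverse=True):
    print(f"{issue.severity:5.1f}  {issue.name}")
```

Because frequency is a fraction between 0 and 1, severities with these scales range from 0 up to 8 × 5 = 40.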

Prioritised list of the solutions

Calculate the ROI of each solution. Try to come up with more than one solution for each issue.

Solution ROI = effectiveness / complexity

- Effectiveness - the sum of the severities of all issues this solution fixes

- Complexity - the technical complexity of the suggested solution. You can use the Fibonacci scale again:
  - 1
  - 2
  - 3
  - 5
  - 8
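Putting the two together, a solution's ROI is its effectiveness divided by its complexity. A minimal sketch in Python; the issue IDs, their severities and the candidate solutions are made up for illustration:

```python
COMPLEXITY_SCALE = (1, 2, 3, 5, 8)

# Made-up severities (criticality × impact × frequency) for three hypothetical issues.
issue_severity = {"ISSUE-1": 24.0, "ISSUE-2": 4.8, "ISSUE-3": 0.4}

# Made-up candidate solutions: the issues each one fixes and its technical complexity.
solutions = {
    "Move the publish button into the header": {"fixes": ["ISSUE-1"], "complexity": 2},
    "Rename the draft toggle": {"fixes": ["ISSUE-2"], "complexity": 1},
    "Redesign the whole editor toolbar": {"fixes": ["ISSUE-1", "ISSUE-2", "ISSUE-3"], "complexity": 8},
}


def roi(solution):
    """Effectiveness (sum of fixed issue severities) divided by complexity."""
    if solution["complexity"] not in COMPLEXITY_SCALE:
        raise ValueError(f"complexity must be one of {COMPLEXITY_SCALE}")
    # set() so an issue listed twice is not counted twice
    effectiveness = sum(issue_severity[issue] for issue in set(solution["fixes"]))
    return effectiveness / solution["complexity"]


# Prioritised list of solutions: highest ROI first.
for name in sorted(solutions, key=lambda n: roi(solutions[n]), reverse=True):
    print(f"{roi(solutions[name]):6.2f}  {name}")
```

Note how the big redesign fixes the most severity in total but ranks last once its complexity is taken into account.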

7. Present the results - prioritised list of the issues and solutions

You will need to do a reality check and present your results to the stakeholders. It would make no sense to do usability testing without presenting it to a wider audience. Remember that your goal is to help the stakeholders decide how to proceed with the product.

Sources

- https://www.toptal.com/designers/usability-testing/turning-usability-testing-data-into-action

- Just Enough Research by Erika Hall

- Measuring the User Experience by Tom Tullis and Bill Albert